You may recall that in my last post I went through an involved process to show how one could generate storm losses for individuals over a number of years. That process, which underlies a project to examine the effect of legal change on the sustainability of a catastrophe insurer, involved copulas of beta distributions and a parameter mixture distribution in which the underlying distribution was also a beta distribution. It was not for the faint of heart.

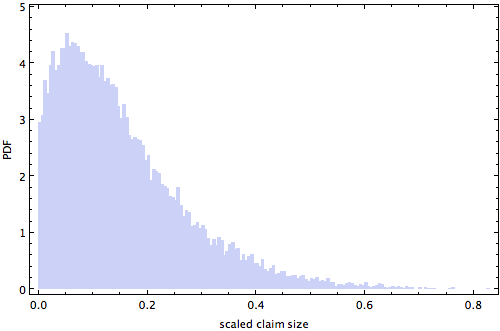

One purpose of this effort was to generate a histogram like the one below, which shows the distribution of scaled claim sizes for non-negligible claims. This histogram was obtained by taking one draw from the copula distribution for each of the [latex]y[/latex] years in the simulation and using it to constrain the distribution of losses suffered by each of the [latex]n[/latex] policyholders in each of those [latex]y[/latex] years. Thus, although the underlying process created a [latex]y \times n[/latex] matrix, the histogram below is for a single “flattened” vector of [latex]y \times n[/latex] values.

But if we stare at that histogram for a while, we recognize the possibility that it might be approximated by a simple statistical distribution. If that were the case, we could use the simple distribution rather than the elaborate process for generating individual storm loss distributions. In other words, there might be a computational shortcut that approximates the elaborate process. To get the experience of all [latex]n[/latex] policyholders, including those who did not have a claim at all, we could then just upsample random variates drawn from our hypothesized simple distribution and add zeros. Alternatively, we could create a mixture distribution in which most of the time one drew from a distribution that was always zero and, when there was a positive claim, one drew from this hypothesized simple distribution.
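To make the mixture idea concrete, here is a minimal Python sketch. Everything specific in it is an assumption for illustration: the claim probability `claim_prob`, the helper names `simulate_losses` and `beta_claim_size`, and above all the beta distribution with shape parameters 0.5 and 2.0 that stands in for the as-yet-unidentified simple distribution. It also treats every policyholder-year as independent, so it does not reproduce the year-level dependence that the copula draw imposes in the full process.

```python
import numpy as np


def simulate_losses(n_years, n_policyholders, claim_prob, draw_claim_size, rng):
    """Zero-inflated mixture: a cell is 0 unless a positive claim occurs.

    draw_claim_size(size, rng) must return `size` positive claim sizes.
    """
    # Decide, cell by cell, whether a positive claim occurred.
    has_claim = rng.random((n_years, n_policyholders)) < claim_prob
    losses = np.zeros((n_years, n_policyholders))
    # Fill only the claim cells with draws from the simple distribution.
    losses[has_claim] = draw_claim_size(int(has_claim.sum()), rng)
    return losses


def beta_claim_size(size, rng):
    # Hypothetical placeholder for the simple distribution; the shape
    # parameters are illustrative, not fitted to the histogram.
    return rng.beta(0.5, 2.0, size=size)


rng = np.random.default_rng(0)
losses = simulate_losses(
    n_years=50,            # y, assumed value
    n_policyholders=1000,  # n, assumed value
    claim_prob=0.02,       # assumed probability of a positive claim
    draw_claim_size=beta_claim_size,
    rng=rng,
)
flattened = losses.ravel()           # the "flattened" vector of y * n values
positive = flattened[flattened > 0]  # the values a claim-size histogram would use
```

Passing the claim-size sampler in as a function keeps the zero-inflation logic separate from the choice of distribution, so a different candidate can be swapped in without touching the rest.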